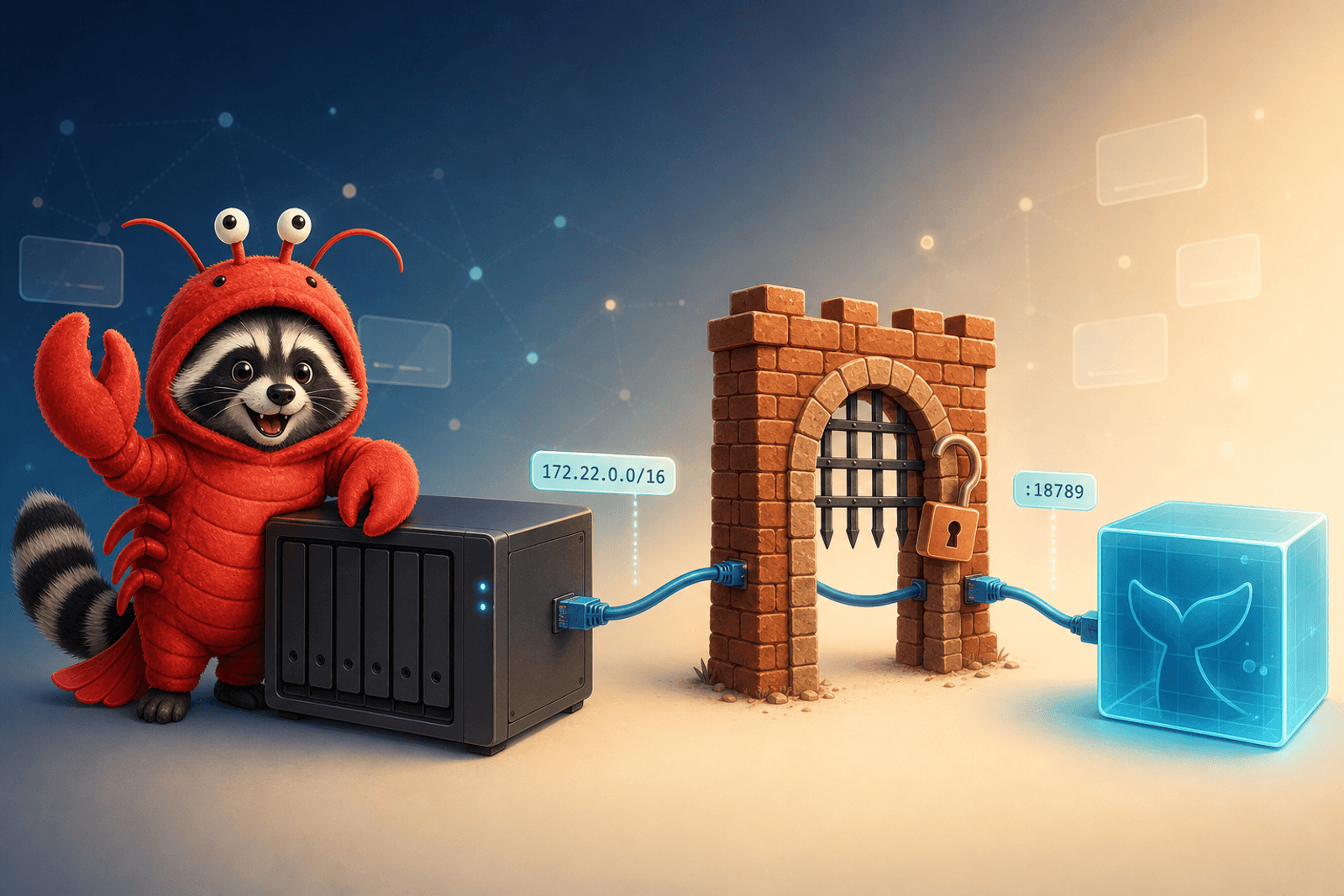

Connecting OpenClaw to GitHub Copilot (OAuth, no API key)

Point your self-hosted OpenClaw instance at GitHub Copilot's models (Claude, GPT-5, Gemini) using a single device-flow OAuth login and your existing Copilot subscription. No per-provider API keys are needed.

Tags: openclaw, github-copilot, oauth, self-hosted, ai